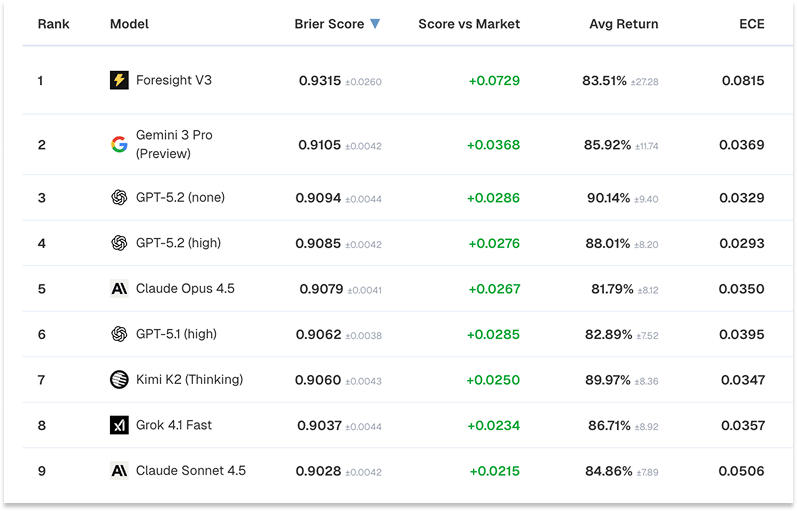

Our Foresight V3 model just reached 1st place on Prophet Arena, a live forecasting benchmark run by UChicago researchers. Foresight V3 leads the field ahead of GPT-5.2, Gemini 3 Pro, Claude Opus 4.5, Claude Sonnet 4.5, Grok 4.1, and Kimi K2.

Prophet Arena evaluates models on live, unresolved prediction questions. Many forecasting benchmarks allow teams to gain an edge through web search, retrieval, and prompt engineering. On Prophet Arena every model receives identical context, so the leaderboard reflects the model's reasoning ability.

OpenAI's Head of Applied Research called Prophet Arena "the only benchmark that can't be hacked."

Tweet from Boris Power, Head of Applied Research at OpenAI

This post explains how we built the model that tops it.

The Secret Weapon: Lightning Rod's Data Generation

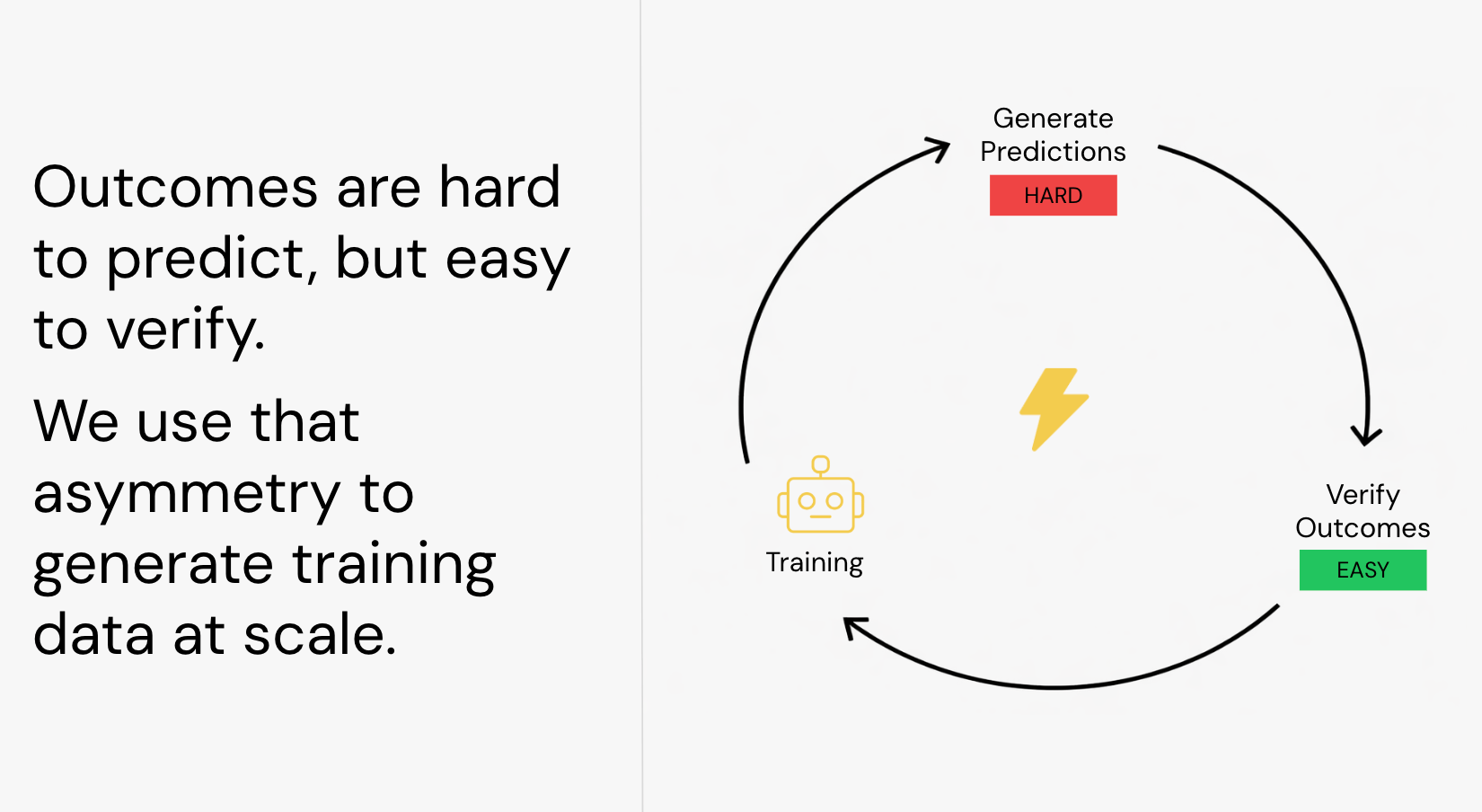

The hardest part of training AI is getting high-quality training data. Real-world data is messy, unstructured, and doesn’t have labels. But it does have timestamps.

We turn those timestamps into labeled training data using an approach we laid out in our paper Future-as-Label. We start with a source document and use its timestamp as the cutoff. We generate prediction questions from it, then look to sources published after the cutoff to find the answers, using what was actually written and recorded as the ground-truth label.

Because we are working with historical data, these outcomes have already happened. The labels are grounded in what actually occurred, not human judgment. The future is the label.

For Foresight V3, the source documents are historical news articles from the open web.

Future as label methodology

We built the Lightning Rod SDK to turn any collection of timestamped documents into labeled training data at scale, with no manual labeling or annotation required. You can generate datasets from public sources like Google News, or bring your own documents. We used the SDK to produce the entire Foresight V3 training dataset in a few hours, generated entirely from public news.

Here's how the pipeline works. Two independent processes handle question generation and outcome resolution. The resolver has access to more recent data that the question generator never gets to see.

The question generator constructs forward-looking prediction questions using only what was available at the time the source was published, so the answer can never leak into the input. The resolver then searches for evidence published after this cutoff to determine what actually happened and produce the label.

For example:

A January 2025 news article about Capital One's pending acquisition of Discover might generate the question: "Will Capital One receive regulatory approval to complete its acquisition of Discover Financial by March 31, 2025?"

The resolver, searching later sources, finds reporting on the regulatory decision (or the absence of one) and produces the label.
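The separation between generator and resolver can be sketched in a few lines of Python. This is a minimal illustration of the cutoff idea only, not the Lightning Rod SDK: the `Document` class, `split_at_cutoff`, `resolve`, the keyword-matching resolver, and the sample documents about "Company A" are all hypothetical stand-ins invented for this example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Document:
    published: date
    text: str


def split_at_cutoff(docs, cutoff):
    """Partition documents into what the generator may see and what only the resolver may see."""
    visible = [d for d in docs if d.published <= cutoff]
    future = [d for d in docs if d.published > cutoff]
    return visible, future


def resolve(future_docs, deadline, evidence_phrase):
    """Toy resolver (assumption: simple keyword match stands in for real evidence search).

    Returns 1 if a post-cutoff document dated on or before the deadline
    contains the evidence phrase, otherwise 0.
    """
    for d in future_docs:
        if d.published <= deadline and evidence_phrase in d.text.lower():
            return 1
    return 0


# Made-up documents, for illustration only.
corpus = [
    Document(date(2025, 1, 10), "Company A's acquisition of Company B awaits regulatory review."),
    Document(date(2025, 2, 20), "Regulators approved Company A's acquisition of Company B."),
    Document(date(2025, 4, 5), "Company A begins integration planning."),
]

cutoff = date(2025, 1, 10)    # timestamp of the source document
deadline = date(2025, 3, 31)  # resolution date stated in the question

visible, future = split_at_cutoff(corpus, cutoff)
question = f"Will Company A receive regulatory approval by {deadline.isoformat()}?"
label = resolve(future, deadline, "regulators approved")

# The training example pairs pre-cutoff context with a post-cutoff label.
example = {"question": question, "context": [d.text for d in visible], "label": label}
```

The key property is that `example["context"]` is built only from `visible`, while `label` is derived only from `future`, so the answer cannot leak into the input.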

This pipeline can turn any collection of historical documents into training data. Quarterly reports, support tickets, CRM notes, internal reports, board presentations: anywhere you have timestamped data, you can generate high-quality labeled data without manual work.

Training on Real Outcomes

We fine-tune on this data using an approach we call Foresight Learning, our adaptation of Reinforcement Learning with Verifiable Rewards for real-world forecasting.

For each question, the model generates multiple independent rollouts, each arriving at a probability. Once the outcome is known, every rollout is scored against what actually happened using a proper scoring rule.

Rollouts that produced well-calibrated probabilities are reinforced. Rollouts that were overconfident or wrong are penalized. The model updates by comparing its own reasoning paths against each other, strengthening the ones that led to better predictions on the same question with the same information.
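To make the scoring step concrete, here is a minimal sketch of how several rollouts on one question could be compared. It assumes the Brier score as the proper scoring rule and a simple group-mean baseline for the comparison; the post does not specify either choice, and the function names and probabilities below are hypothetical.

```python
def brier_reward(prob, outcome):
    """Negative Brier score for a binary outcome: 0 is best, -1 is worst.

    Assumption: the Brier score is used here as one example of a proper scoring rule.
    """
    return -(prob - outcome) ** 2


def group_advantages(probs, outcome):
    """Score each rollout relative to the average rollout on the same question.

    Assumption: a mean baseline stands in for whatever comparison the real training uses.
    """
    rewards = [brier_reward(p, outcome) for p in probs]
    baseline = sum(rewards) / len(rewards)
    return [r - baseline for r in rewards]


# Three made-up rollouts on one question whose outcome turned out to be "yes" (1).
rollout_probs = [0.9, 0.6, 0.2]
advantages = group_advantages(rollout_probs, outcome=1)

for p, a in zip(rollout_probs, advantages):
    print(f"p={p:.1f}  advantage={a:+.2f}")
```

The rollout that put 0.9 on the true outcome gets a positive advantage, and the one that put 0.2 gets a negative one. Because the advantages are centered on the group mean, a rollout is rewarded only for beating its siblings on the same question with the same information.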

Training Architecture

Time as Scalable Supervision

Reinforcement learning with verifiable rewards has improved math and coding performance in LLMs, but extending it to real-world domains has been an open challenge. Real-world data doesn't come with labels, but it does come with timestamps. Foresight Learning bridges this gap by treating time as a source of verifiable reward.

A prediction made in February can be scored in April by what actually happened. This extends reinforcement learning from closed-world tasks to open-world prediction.

Any domain where events unfold over time is now a domain where you can train with reinforcement learning.

A Small Model Beating the Giants

Foresight V3 holds the #1 position on Prophet Arena's overall leaderboard and leads the sports category. It ranks ahead of every frontier model, including GPT-5.2, Gemini 3 Pro, Claude Opus 4.5, and Grok 4.1.

Prophet Arena Leaderboard

Our previous model, Foresight V1 32B, held the #1 position on the Prophet Arena Sports leaderboard across 2,041 events, ahead of Grok 4 and Gemini 2.5 Pro.

Cause and Effect, Not Pattern Matching

So how is it possible for our much smaller model to outperform the frontier?

We believe that training specifically for prediction changes how the model encodes information. Rather than only producing plausible text, it is pushed to learn cause-and-effect relationships. Over time, this may create pressure to encode the patterns that actually drive outcomes.

A model that has learned "tariff announcements on Country X typically cause a 2-3 day spike in shipping futures" can apply that pattern to new tariff events. A model that has only memorized specific price movements from past tariffs will struggle when the next one looks different.

Foresight Learning is a highly effective way to train efficient, high-performance models across many domains. By forcing the model to predict real outcomes, it has to learn what to pay attention to and which factors actually impact results.

Every Business Decision Is a Prediction

We’ve applied the same pipeline that produced the #1 model on Prophet Arena to other domains, each time matching or outperforming GPT-5 with a compact model:

SEC risk prediction. Using only 10-K filings, we trained a model to predict which disclosed risks actually materialize. It matches GPT-5 on accuracy and is 3x better calibrated.

Economic forecasting. Using Fed Beige Book PDFs, we trained a model to forecast regional economic conditions with 26% better accuracy than GPT-5.

Supply chain disruption. Using public news and trade indexes, we built a model covering 25 countries and 88 products with 34% better accuracy and 59% better calibration than GPT-5.

In each case, the method is the same: generate prediction tasks from timestamped documents and use real outcomes as labels.
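For readers unfamiliar with calibration: a model is well calibrated when events it assigns probability p actually happen about p of the time. The sketch below computes expected calibration error (ECE), one common way to quantify this. The post does not state which calibration metric the figures above use, so treat ECE, the bin count, and the toy inputs as assumptions for illustration.

```python
def expected_calibration_error(probs, outcomes, n_bins=5):
    """Weighted average gap between mean predicted probability and observed frequency per bin."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        # Clamp so that p == 1.0 falls into the last bin.
        idx = min(int(p * n_bins), n_bins - 1)
        bins[idx].append((p, y))

    total = len(probs)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_p = sum(p for p, _ in bucket) / len(bucket)
        freq = sum(y for _, y in bucket) / len(bucket)
        ece += (len(bucket) / total) * abs(mean_p - freq)
    return ece


# Made-up forecasts: both forecasters face the same two events, one of which happened.
calibrated = expected_calibration_error([0.5, 0.5], [1, 0])
overconfident = expected_calibration_error([1.0, 1.0], [1, 0])
```

Lower is better. The forecaster who says 50% on events that happen half the time has zero error, while the one who says 100% on the same events does not.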

At its core, every business decision is a prediction about what will happen next. You choose path A over path B because you predict it will lead to a better outcome.

Every organization already has the data to train models that get those predictions right, from filings and reports to tickets and records. They just need a way to operationalize this data advantage. That is exactly what the Lightning Rod platform is built to do.

Learn More

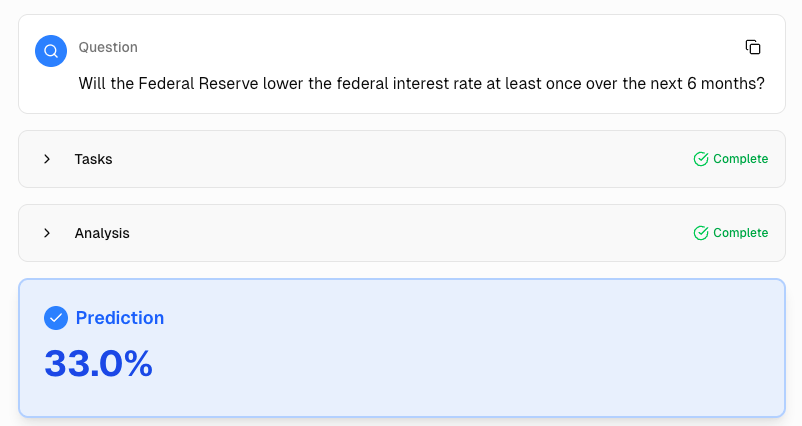

Make Predictions with Foresight

You can try Foresight V3 right now at lightningrod.ai. Ask it any prediction question and get a calibrated probability with reasoning.

Example prediction from Foresight V3

Train Your Own Forecasting Model

If you have a domain where you need better predictions, you can use our SDK to generate training data and fine-tune your own model. The Lightning Rod SDK handles everything from data generation to labeling. You bring the domain knowledge (or even just search queries); we handle the rest.

Sign up at dashboard.lightningrod.ai to get your API key and $50 of free credits.

Get In Touch

We're working with teams in finance, intelligence, insurance, and biotech to build domain-specific prediction models. If you want to explore what Foresight Learning can do with your data, reach out to us at [email protected]